Stanford Develops CITL Procedure For Capturing Superposition Of All Diffracted And Undiffracted Light Of The Display

Augmented and virtual reality systems have the potential for a transformative impact on society by providing a seamless interface between a user and the digital world. Holographic near-eye displays must still overcome the biggest remaining system challenges: improving the user experience and enabling more compact devices. Holographic displays built around spatial light modulators (SLMs) offer a route to high-quality AR, potentially in a compact form factor that still maintains a large field of view.

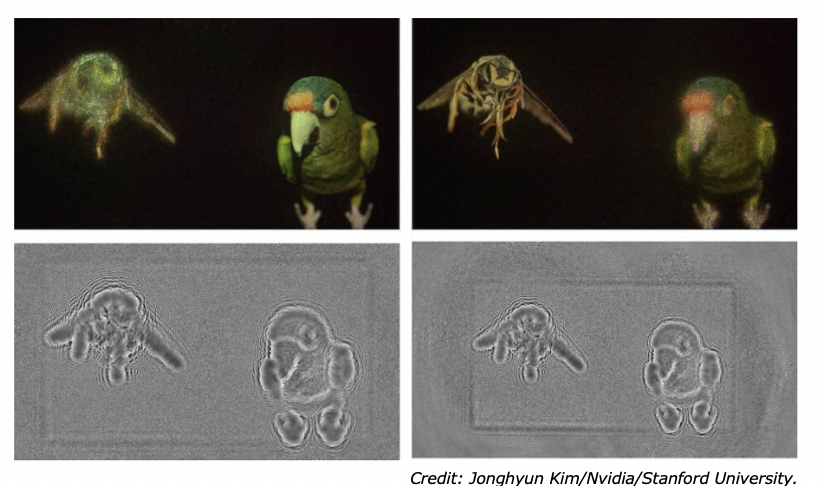

Figure 1: Captured near and far plane focal images acquired with the optimization process (top) and the two phase images used to create the hologram.

SLMs create a holographic image indirectly, by shaping the wave field needed to form a target image, but achieving high image quality is a challenge because of their low diffraction efficiency. Any significant amount of undiffracted light interferes with the user-controlled diffraction orders and degrades the observed image.

Stanford University researchers have developed a possible solution, a technique termed Michelson holography (MH) after its inspiration in Michelson interferometry. As described in Optica, the new architecture employs two SLMs. "The core idea of Michelson holography is to destructively interfere with the diffracted light of one SLM using the undiffracted light of the other," said Jonghyun Kim, of Stanford University and project partner Nvidia. "This allows the undiffracted light to contribute to forming the image, rather than creating speckle and other artifacts."

The Stanford platform relies on a design methodology termed camera-in-the-loop (CITL), a computational approach in which deviations of the result from ideal light transport are assessed from images captured by a camera, and the findings are fed back into the computation. CITL allows holography to partially compensate for the undiffracted light of an SLM without having to explicitly model all of the terms involved. The Stanford project pushed the principle further by leveraging CITL optimization to enhance the image quality of two phase-only SLMs, which mitigates the effect of undiffracted light in a fully automatic manner, the team noted in its published paper.

The Stanford CITL procedure captures the superposition of all diffracted and undiffracted light of the display, assesses the apparent error against a target image, and then backpropagates that error into both SLM patterns simultaneously. "This procedure does not require us to explicitly model the SLM pixel structure or the undiffracted light," said the team. "We simply need a camera that captures intermediate images of this iterative holography algorithm, which automatically optimizes the resulting phase patterns for both SLMs."
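The capture, compare, and backpropagate loop described above can be illustrated with a deliberately small toy. The following is a minimal sketch, not the team's implementation: it assumes a per-pixel model with no free-space propagation, replaces the physical camera with a simulated `capture` function, and uses made-up amplitudes (`DIFFRACTED`, `UNDIFFRACTED`) plus hypothetical names throughout. What it keeps from the procedure is that the error is measured on the "captured" image, which contains undiffracted light, while the gradient comes from an idealized model that knows nothing about that light.

```python
import cmath

# Assumed properties of the simulated hardware. The optimizer never reads
# these values; it only sees the images returned by capture().
DIFFRACTED = 0.8    # amplitude of the phase-modulated (diffracted) light
UNDIFFRACTED = 0.2  # amplitude of the unmodulated (undiffracted) light


def capture(phase_a, phase_b):
    """Simulated camera: intensity of the superposition of both SLM fields."""
    image = []
    for pa, pb in zip(phase_a, phase_b):
        field_a = DIFFRACTED * cmath.exp(1j * pa) + UNDIFFRACTED
        field_b = DIFFRACTED * cmath.exp(1j * pb) + UNDIFFRACTED
        image.append(abs(0.5 * (field_a + field_b)) ** 2)
    return image


def model_gradients(pa, pb):
    """Intensity gradients w.r.t. each phase under an ideal model that
    contains no undiffracted light: I = |0.5 * (e^{i pa} + e^{i pb})|^2."""
    ea, eb = cmath.exp(1j * pa), cmath.exp(1j * pb)
    conj = (0.5 * (ea + eb)).conjugate()
    return (conj * 1j * ea).real, (conj * 1j * eb).real


def mse(image, target):
    return sum((i - t) ** 2 for i, t in zip(image, target)) / len(target)


def citl_optimize(target, steps=500, lr=0.2):
    """Toy camera-in-the-loop descent over two phase patterns."""
    phase_a = [0.5] * len(target)
    phase_b = [-0.5] * len(target)
    history = []
    for _ in range(steps):
        captured = capture(phase_a, phase_b)   # forward pass: the "camera"
        history.append(mse(captured, target))
        for k, (shot, goal) in enumerate(zip(captured, target)):
            err = shot - goal                  # error measured on the capture
            grad_a, grad_b = model_gradients(phase_a[k], phase_b[k])
            # Backward pass: push the captured error through the ideal
            # model's gradient into both SLM patterns at the same time.
            phase_a[k] -= lr * 2.0 * err * grad_a
            phase_b[k] -= lr * 2.0 * err * grad_b
    history.append(mse(capture(phase_a, phase_b), target))
    return phase_a, phase_b, history


target = [0.1, 0.3, 0.5, 0.7, 0.9]  # arbitrary target intensities
phase_a, phase_b, history = citl_optimize(target)
print(f"initial MSE {history[0]:.4f}, final MSE {history[-1]:.2e}")
```

In this toy the loop still converges although the gradient model ignores the undiffracted term, because each update is driven by the error in the captured image. A real system would add wave propagation between the SLMs and the image plane and would typically rely on automatic differentiation rather than hand-written gradients.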

In trials, the bench-top MH architecture was used to display several 2D and 3D holograms, including images of an insect and a bird. The demonstration showed that the dual-SLM holographic display with CITL calibration provided significantly better image quality than existing computer-generated hologram approaches. "Once the computer model is trained, it can be used to precisely figure out what a captured image would look like without physically capturing it," said Kim. "This means that the entire optical setup can be simulated in the cloud to perform real-time inference of computationally heavy problems with parallel computing."

The next steps include translating the bench-top setup into a system that could be built into a wearable AR or VR device. According to the team, the approach of co-designing hardware and software could also be useful for improving other applications of computational displays and computational imaging in general.

Source: Optics.org, February 2, 2021
